Hierarchical Clustering

What you learn in Hierarchical Clustering

About this Free Certificate Course

Clustering is an important part of machine learning and underpins many of the applications we use today. It is widely used, especially when unsupervised learning techniques are required. Hierarchical clustering describes how multiple data points can be combined into, or separated into, clusters whose members share similar characteristics. Because it is important for machine learning enthusiasts to understand this in detail, we at Great Learning have created this course to help you get started with hierarchical clustering and understand it thoroughly.


With this course, you get:

- Free lifetime access: learn anytime, anywhere
- A completion certificate: stand out to your professional network
- 1.0 hours of self-paced video lectures

Frequently Asked Questions

What is hierarchical clustering and how does it work?

Definition: Hierarchical clustering, also known as hierarchical cluster analysis (HCA), is a technique from data mining and statistics. It groups similar objects together, and these groups are called clusters. It is a method of cluster analysis that builds up a hierarchy of clusters. The endpoint is a set of clusters in which each cluster is distinct from the others, while the objects inside each cluster are similar to one another.

How hierarchical clustering works

Hierarchical clustering starts by treating each observation as a separate cluster. It then repeats the following two steps:

Step 1: Identify the two clusters that are closest to each other.

Step 2: Merge these two clusters into one.

This process continues until all the clusters have been merged into a single cluster.
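The two-step loop above fits in a few lines of Python. This is a minimal, unoptimized sketch on one-dimensional points. It assumes single linkage, where the distance between two clusters is the smallest distance between any of their members. The names `single_link` and `agglomerate` are hypothetical.

```python
def single_link(a, b):
    """Distance between clusters a and b: their closest pair of members (single linkage)."""
    return min(abs(x - y) for x in a for y in b)


def agglomerate(points):
    """Repeatedly merge the two closest clusters; return the merge history."""
    clusters = [[p] for p in points]  # every observation starts as its own cluster
    history = []
    while len(clusters) > 1:
        # Step 1: find the pair of clusters that are closest to each other
        i, j = min(
            ((i, j) for i in range(len(clusters)) for j in range(i + 1, len(clusters))),
            key=lambda ij: single_link(clusters[ij[0]], clusters[ij[1]]),
        )
        dist = single_link(clusters[i], clusters[j])
        history.append((clusters[i], clusters[j], dist))
        # Step 2: merge them and drop the two originals
        merged = sorted(clusters[i] + clusters[j])
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)] + [merged]
    return history


for left, right, dist in agglomerate([1.0, 2.0, 6.0, 7.0, 15.0]):
    print(left, "+", right, "at distance", dist)
```

For these sample values, the merges happen at distances 1.0, 1.0, 4.0 and 8.0. The isolated point 15.0 joins last.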

What is hierarchical clustering used for?

Hierarchical clustering is widely used to analyze social network data, where nodes are compared with one another based on their similarity. It is also used to build phylogenetic trees, for example to chart evolution or track viruses.

How do you interpret hierarchical clustering?

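Hierarchical clustering is usually interpreted through its dendrogram. Read from the bottom up, it shows the order in which clusters were joined, and the height at which two clusters join reflects how dissimilar they are. Cutting the dendrogram at a chosen height gives a flat set of clusters. A lower cut yields more, smaller clusters, and a higher cut yields fewer, larger ones.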
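As a rough illustration of interpreting the result, the sketch below "cuts" a hierarchy at a chosen height `h` to obtain flat clusters. It assumes single linkage on 1-D points. Under that linkage, cutting at height `h` is equivalent to linking every pair of points whose distance is at most `h` and taking the connected components, computed here with a small union-find. The function name and sample values are hypothetical.

```python
def cut_single_linkage(points, h):
    """Flat clusters from cutting a single-linkage hierarchy at height h."""
    parent = list(range(len(points)))  # union-find forest, one node per point

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    # Join every pair of points that would already be merged at or below height h
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            if abs(points[i] - points[j]) <= h:
                parent[find(i)] = find(j)

    # Collect the points of each connected component
    groups = {}
    for i, p in enumerate(points):
        groups.setdefault(find(i), []).append(p)
    return sorted(groups.values())


points = [1.0, 2.0, 6.0, 7.0, 15.0]
print(cut_single_linkage(points, 2.0))  # a low cut: several small clusters
print(cut_single_linkage(points, 5.0))  # a higher cut: fewer, larger clusters
```

Raising `h` corresponds to moving the cut further up the dendrogram, so clusters can only merge and never split.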

Will I get a certificate after completing this Hierarchical Clustering free course?

Yes, you will get a certificate of completion for Hierarchical Clustering after completing all the modules and passing the assessment. The assessment tests your knowledge of the subject and certifies your skills.

How much does this Hierarchical Clustering course cost?

It is an entirely free course from Great Learning Academy. Anyone interested in learning the basics of Hierarchical Clustering can get started with this course.


Hierarchical Clustering

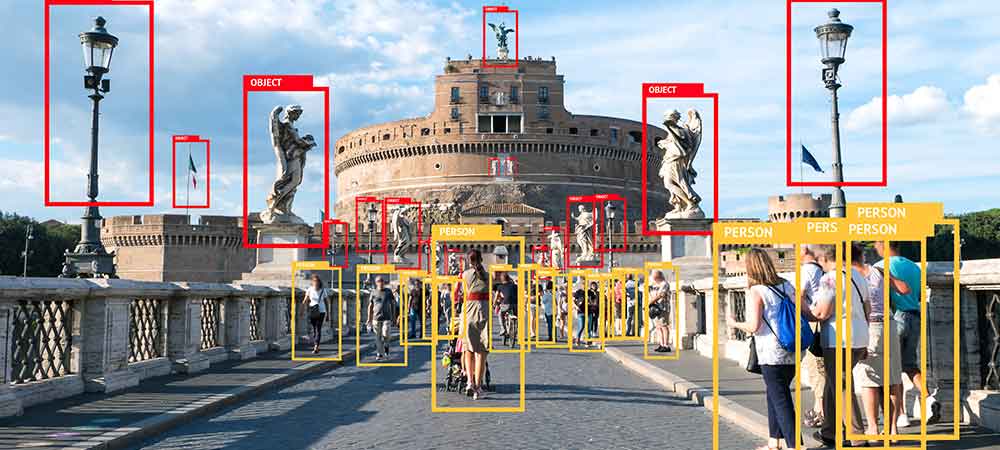

Hierarchical clustering, also known as hierarchical cluster analysis (HCA), is widely used in data mining and statistics. It is a method of cluster analysis that builds a hierarchy of clusters.

Hierarchical clustering groups data into a tree of clusters. In its bottom-up (agglomerative) form, it treats each data point as a separate cluster and then repeatedly merges similar clusters into one group.

The endpoint of hierarchical clustering is a set of clusters that are distinct from one another, while the objects inside each cluster are similar to each other.


The diagram used to represent hierarchical clustering is the dendrogram. It is a tree-like structure that records the sequence of merges or splits, drawn as an inverted tree. Viewed bottom-up, it shows the order in which clusters were merged. Viewed top-down, it shows how clusters are broken up.
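A dendrogram is essentially the merge history stored as a binary tree. The sketch below takes a hypothetical, hand-written merge list for five points A to E, in the order a bottom-up run would produce it. It replays the list into nested tuples of the form `(left, right, merge_height)`, then prints the tree top-down as an indented outline. The tuple representation and the `build_tree` and `render` helpers are illustrative assumptions, not a plotting library.

```python
def build_tree(leaves, merges):
    """Replay bottom-up merges; each merge names two current clusters by label."""
    nodes = {label: label for label in leaves}  # every leaf starts as its own cluster
    for a, b, height, new_label in merges:
        # Replace clusters a and b with one internal node (left, right, height)
        nodes[new_label] = (nodes.pop(a), nodes.pop(b), height)
    (root,) = nodes.values()  # exactly one cluster should remain at the top
    return root


def render(tree, depth=0):
    """Top-down text view of the dendrogram, one indent level per split."""
    pad = "  " * depth
    if isinstance(tree, str):  # a leaf is just its label
        return [pad + tree]
    left, right, height = tree
    return [f"{pad}merge at {height}"] + render(left, depth + 1) + render(right, depth + 1)


# Hypothetical merge history: (cluster, cluster, merge height, new cluster label)
merges = [
    ("A", "B", 1.0, "AB"),
    ("C", "D", 1.0, "CD"),
    ("AB", "CD", 4.0, "ABCD"),
    ("E", "ABCD", 8.0, "root"),
]
tree = build_tree(["A", "B", "C", "D", "E"], merges)
print("\n".join(render(tree)))
```

Because merge heights never decrease as you go up the tree, each internal node sits at or above its children. That property is what allows a dendrogram to be cut at a single height.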

The basic aim of hierarchical clustering is to produce a hierarchical series of nested clusters.

Importance of using hierarchical clustering

Hierarchical clustering is one of the most popular and widely used clustering methods. It is commonly used to analyze social network data, where nodes are compared with one another based on their similarity. It is a powerful technique for building tree structures from data similarities.

In technical terms, hierarchical clustering is a hierarchical decomposition of the data based on group similarity.

Methods to generate Hierarchical Clustering

There are two methods of generating a hierarchical clustering:

1) Agglomerative Hierarchical Clustering

2) Divisive Hierarchical Clustering

Agglomerative Hierarchical Clustering

At the start, every data point is treated as an individual cluster, and at every step the nearest pair of clusters is merged. This is known as the bottom-up approach.

The approach to agglomerative hierarchical clustering is as follows:

1) Calculate the proximity matrix, which holds the similarity (or distance) between every pair of data points.

2) Consider every data point as an individual cluster.

3) Merge the two clusters that are most similar to each other.

4) Update the proximity matrix with the distances between the newly merged cluster and the remaining clusters.

5) Repeat steps 3 and 4 until only a single cluster remains.
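The steps above map onto a proximity matrix that is updated after every merge. The sketch below makes three assumptions: 2-D points, Euclidean distance, and complete linkage (the distance between two clusters is the largest distance between their members). With complete linkage, step 4 reduces to taking a `max` of two existing entries; other linkage criteria would need a different update rule. The function name `agglomerative` is hypothetical.

```python
import math


def agglomerative(points):
    """Complete-linkage agglomerative clustering driven by a proximity matrix."""
    n = len(points)
    # Step 1: proximity matrix, stored as {(i, j): distance} with i < j
    dist = {(i, j): math.dist(points[i], points[j])
            for i in range(n) for j in range(i + 1, n)}
    # Step 2: every data point starts as its own cluster (id -> member indices)
    clusters = {i: [i] for i in range(n)}
    next_id, merges = n, []
    while len(clusters) > 1:  # Step 5: repeat until a single cluster remains
        # Step 3: merge the two closest (most similar) clusters
        (a, b), d = min(dist.items(), key=lambda kv: kv[1])
        clusters[next_id] = clusters.pop(a) + clusters.pop(b)
        merges.append((a, b, d))
        # Step 4: distance from the new cluster to each remaining cluster;
        # complete linkage keeps the larger of the two old distances
        for k in clusters:
            if k != next_id:
                d_ak = dist[tuple(sorted((a, k)))]
                d_bk = dist[tuple(sorted((b, k)))]
                dist[(k, next_id)] = max(d_ak, d_bk)
        # Remove entries that still refer to the two clusters just merged
        dist = {key: v for key, v in dist.items() if a not in key and b not in key}
        next_id += 1
    return merges


pts = [(0, 0), (0, 1), (4, 0), (4, 3)]
for a, b, d in agglomerative(pts):
    print(f"merge clusters {a} and {b} at distance {d:.2f}")
```

New clusters receive ids starting at `n`, similar to how many libraries label internal nodes. Here, clusters 0 and 1 merge into cluster 4, and clusters 2 and 3 merge into cluster 5.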

Divisive Hierarchical Clustering

Divisive hierarchical clustering is the opposite of agglomerative hierarchical clustering: it works top-down. The steps followed in divisive hierarchical clustering are:

1) Consider all the data points.

2) Treat these data points as a single cluster.

3) In every iteration, split a cluster by separating out the data points that are least similar to the rest of that cluster.

4) At the end of divisive hierarchical clustering, we are left with N clusters, one for each of the N data points.
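The text does not name a specific divisive algorithm. One well-known choice is DIANA (DIvisive ANAlysis), and the sketch below is a simplified version of it. It assumes 1-D points with absolute difference as the dissimilarity measure. At each iteration it picks the cluster with the largest diameter and seeds a "splinter group" with that cluster's most dissimilar point. It then moves over any point that is, on average, closer to the splinter group than to the rest. The function names are hypothetical.

```python
def mean_dist(p, group):
    """Average absolute difference between value p and the values in group."""
    return sum(abs(p - q) for q in group) / len(group)


def split(cluster):
    """Split one cluster (at least 2 points) into a splinter group and the rest."""
    rest = sorted(cluster)
    # Seed the splinter group with the point that is, on average, most dissimilar
    seed = max(range(len(rest)), key=lambda i: mean_dist(rest[i], rest[:i] + rest[i + 1:]))
    splinter = [rest.pop(seed)]
    while len(rest) > 1:
        # How much closer each remaining point is to the splinter group than to the rest
        gains = [mean_dist(p, rest[:i] + rest[i + 1:]) - mean_dist(p, splinter)
                 for i, p in enumerate(rest)]
        best = max(range(len(rest)), key=lambda i: gains[i])
        if gains[best] <= 0:
            break  # no remaining point prefers the splinter group
        splinter.append(rest.pop(best))
    return sorted(splinter), rest


def divisive(points):
    """Top-down clustering: keep splitting until every point is its own cluster."""
    clusters = [sorted(points)]
    history = []
    while any(len(c) > 1 for c in clusters):
        # Split the non-singleton cluster with the largest diameter (max - min in 1-D)
        target = max((c for c in clusters if len(c) > 1), key=lambda c: max(c) - min(c))
        clusters.remove(target)
        left, right = split(target)
        clusters += [left, right]
        history.append((left, right))
    return history


for left, right in divisive([1.0, 2.0, 6.0, 7.0, 15.0]):
    print(left, "|", right)
```

With N points, the loop performs N - 1 splits and ends with N singleton clusters, matching the last step listed above.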

Data required for hierarchical clustering

The input to hierarchical clustering can be either a distance matrix or raw data. When raw data is entered into the software, it computes the distance matrix automatically in the background.
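For raw data, computing the distance matrix means evaluating a distance function for every pair of observations. The sketch below assumes numeric rows and Euclidean distance. Real software may offer other metrics, and the sample rows are hypothetical.

```python
import math


def distance_matrix(rows):
    """Symmetric matrix of Euclidean distances between every pair of rows."""
    n = len(rows)
    d = [[0.0] * n for _ in range(n)]  # the diagonal stays 0: each row is 0 from itself
    for i in range(n):
        for j in range(i + 1, n):
            d[i][j] = d[j][i] = math.dist(rows[i], rows[j])  # fill both halves at once
    return d


raw = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]  # hypothetical raw observations
for row in distance_matrix(raw):
    print(row)
```

Only the upper triangle is computed and then mirrored, because the distance from i to j equals the distance from j to i.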

Strengths of Hierarchical Clustering

The main strength of hierarchical clustering is that it is easy to perform and easy to understand. Commonly used clustering techniques include:

- Hierarchical clustering
- K-means cluster analysis
- Latent class analysis
- Self-organizing maps

Compared with the other techniques in this list, the math of hierarchical clustering is the easiest to understand, and it is straightforward to program. The main output of hierarchical clustering is the dendrogram, which is arguably the most intuitive output of all these algorithms.

Weaknesses of Hierarchical Clustering

Some of the biggest weaknesses of hierarchical clustering are:

- It does not work with missing data.
- It involves many arbitrary decisions. You must specify both the distance metric and the linkage criterion, and different choices can produce different clusterings.
- It works poorly with mixed data types, because it is difficult to decide how to compute the distance matrix. There is no straightforward formula for the distance between observations whose variables are both numeric and qualitative.
- It does not scale well to very large datasets. The pairwise distance matrix alone has n(n-1)/2 entries for n observations, and the resulting dendrogram is easily misinterpreted.
- It rarely provides the best solution, because each merge (or split) is made greedily and is never revisited in later steps.
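To see why the choice of linkage criterion is one of the arbitrary decisions listed above, the sketch below measures the distance between the same two clusters under three common criteria. Single linkage uses the closest pair of members, complete linkage the farthest pair, and average linkage the mean over all cross-cluster pairs. The 1-D cluster values are hypothetical.

```python
from itertools import product
from statistics import mean

# Each criterion reduces the list of cross-cluster pair distances to one number
LINKAGES = {
    "single": min,    # closest pair of members
    "complete": max,  # farthest pair of members
    "average": mean,  # mean over all cross-cluster pairs
}


def linkage_distance(a, b, method):
    """Distance between 1-D clusters a and b under the named linkage criterion."""
    pair_dists = [abs(x - y) for x, y in product(a, b)]
    return LINKAGES[method](pair_dists)


a, b = [1.0, 2.0, 3.0], [5.0, 9.0]
for method in LINKAGES:
    print(method, linkage_distance(a, b, method))
```

The same two clusters are 2.0, 8.0 or 5.0 apart depending on the criterion. Because the merge order is driven by these distances, switching criteria can change which clusters merge first and, with it, the shape of the dendrogram.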

To overcome these drawbacks, more modern alternatives are available. One of them is latent class analysis, which addresses many of the issues associated with hierarchical cluster analysis.

Applications of Hierarchical Clustering

Hierarchical clustering has many applications, including:

- Clustering US Senators through Twitter
- Charting evolution through phylogenetic trees
- Tracking viruses through phylogenetic trees

Conclusion

Hierarchical clustering is a powerful method for building tree structures from data similarities. It has both benefits and drawbacks, but for segmentation it is one of the most useful approaches. It works well when the number of clusters is not known in advance, but it does not work well with very large amounts of data.
